Q: What’s scarier than an animation designed to employ the uncanny valley to terrifying effect? A: A robotic face designed to interpret the electrical signals given off by slime mold as human emotion. And it’s also wearing a little top hat and scarf, for some reason?

Watch the video above, complete with a soundtrack of dissonant electronic drones (those are controlled by the mold as well).

New Scientist explains what’s going on, beyond a robot in a little top hat wincing and smiling as it tries to understand your puny, emotional human/slime ways.

Gale placed slime mould on a forest of 64 micro electrodes, along with some oat flakes. As the mould moved across the electrodes towards the food, it produced electrical signals, which Gale converted into sound frequencies.

And the face?

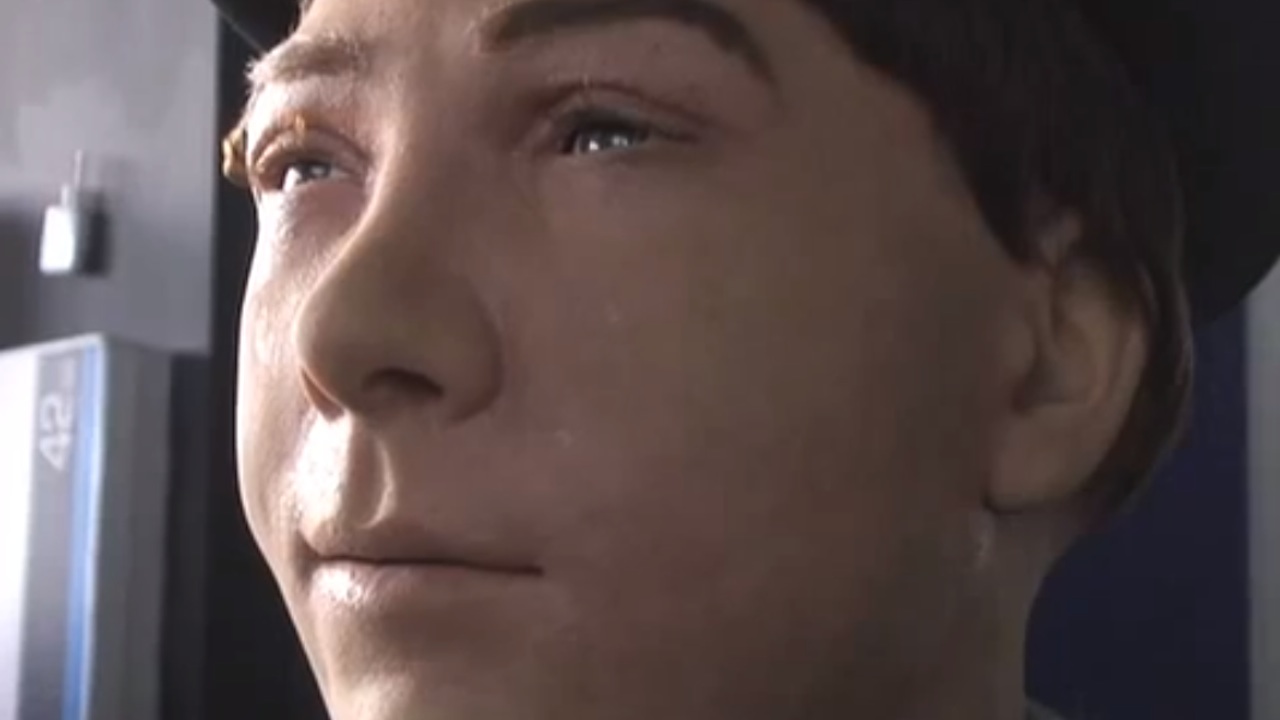

Using a popular psychological model, the team was then able to assign each sound chunk an emotion – anger would be negative, high arousal, for example, while joy might be positive, low arousal. Finally, the team then used an expressive, female Jules robot made by Hanson Robotics to re-enact the sequence of emotions while the soundtrack was played.
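For the curious, here is a minimal sketch of what "assigning each sound chunk an emotion" could look like, assuming each chunk has already been scored for valence (negative to positive) and arousal (low to high) on a scale from -1 to 1. The function name `quadrant_emotion`, the score scale, and the "excitement" and "sadness" labels are assumptions made for illustration; only the anger and joy placements come from the quote above, and none of this is the team's actual code.

```python
def quadrant_emotion(valence: float, arousal: float) -> str:
    """Map a (valence, arousal) pair, each assumed to lie in [-1, 1],
    to a coarse emotion label based on which quadrant it falls in."""
    if valence < 0:
        # Negative valence, high arousal: "anger", per the quote.
        # Negative valence, low arousal: "sadness" is an assumed label.
        return "anger" if arousal >= 0 else "sadness"
    # Positive valence, low arousal: "joy", per the quote.
    # Positive valence, high arousal: "excitement" is an assumed label.
    return "excitement" if arousal >= 0 else "joy"


# Hypothetical scores for a short sequence of sound chunks.
chunks = [(-0.8, 0.9), (0.7, -0.4), (-0.3, -0.6)]
sequence = [quadrant_emotion(v, a) for v, a in chunks]
```

A robot face would then only need to step through `sequence` in time with the soundtrack, pulling the matching expression for each label.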